Art is a subjective experience. Just like those hippie artists to fly in the face of the millennia-old tradition[1] of putting things in order so that we might judge one another. Since we know that the average human being is quite likely to go around enjoying any old piece of art they find appealing, without requiring a full understanding of the work’s place in society, history, and artistic development, it is extremely important that we regularly convene panels of experts to tell us what is good and important. The only other option is chaos. And, as everyone knows from post-apocalyptic novels, chaos always leads to eating babies. The American Film Institute has made a cottage industry out of producing ranked lists of mostly American films, providing a convenient framework for demonstrating that almost all arguments over cinematic preference stem from the other person being a cultural Philistine[2]. Vanity Fair has now waded into the fray of artistic judgment with “Architecture’s Modern Marvels”, a ranked list of the “most important works of architecture created since 1980”.

What, if anything, do these ranked lists tell us about works of art?

Vanity Fair built their list of Architecture’s Modern Marvels by asking 52 experts to name the five[3] architectural projects created since 1980 that they consider most important. This produced a list of 132 “Modern Marvels” that received at least one vote.

The list rankings would have us believe that rank #1 translates to the “most important work of architecture since 1980”. Similarly, rank #50 would translate to the “50th most important work”, with works getting progressively less important as we go down the list. But, that is not how we do things round here.

Round here, we always stand up straight.

Round here, something radiates.

Round here we talk like lions,

But we sacrifice like lambs.

Round here, she’s slipping through my hands.

-“Round Here” by Counting Crows (August and Everything After)[4]

Whoa. Sorry about that, folks. Welcome to my brain. At least this is not an audio format. But, that isn’t how we do things round here. Just kidding. About the music. It really isn’t how we do things around here.

For example, the Vanity Fair list places the Parc de la Villette in a tie for 13th most important, making it look much more important than the Saint-Pierre Church at 22nd, not to mention the unnamed 132nd building on the list. The rankings disguise the fact that very little separates these three in the eyes of the experts. Rank #13 took only 3 votes and was shared in a 9-way tie. Rank #22 required 2 votes. And essentially everything else got 1 vote. So, despite having 132 works on the list, the rankings only go 23 ranks deep.
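To see how a 9-way tie at #13 jumps straight to #22, here is a minimal Python sketch of standard competition ranking (the "1224" convention), in which tied works share a rank and the next distinct vote total skips ahead by the size of the tie. It assumes this is the convention Vanity Fair used, which is what the ranks quoted in this post suggest; `competition_ranks` is a hypothetical helper, and the example totals are the nine highest vote counts listed later in this post.

```python
def competition_ranks(votes):
    """Standard competition ranking: tied totals share a rank, and the
    next distinct total gets a rank equal to its position in the sorted list."""
    ordered = sorted(votes, reverse=True)
    ranks = []
    for position, v in enumerate(ordered, start=1):
        if position > 1 and v == ordered[position - 2]:
            ranks.append(ranks[-1])  # tie: reuse the previous rank
        else:
            ranks.append(position)   # new total: rank jumps to its position
    return list(zip(ordered, ranks))

# The nine highest vote totals reported in this post.
top_nine = [28, 10, 9, 7, 6, 6, 6, 6, 5]
for votes, rank in competition_ranks(top_nine):
    print(f"#{rank}: {votes} votes")
```

The four-way tie at 6 votes occupies #5 through #8, so the next work is #9. The same arithmetic turns nine works tied at #13 into a next rank of #22.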

There appear to be three general rules for the expert-survey-generated list of importance in pop media (i.e., the Top N list):

- Never define importance.

- Define ranks by selecting the votes column in your Microsoft Excel spreadsheet and choosing DATA>SORT>DESCENDING. If necessary to resolve ties, you may sort the names column alphabetically.

- Define the list as the N “top” or “most” [insert adjective] [insert noun], where N is an arbitrary number chosen for base-10 convenience or to fit the column space. Never base N on any actual consideration of the “significance” of the vote totals.

Instead, we (or at least I) want to know two things:

- Out of the large number of architectural works built since 1980, how large would a hypothetical set of potentially important works need to be to produce the observed list (n=132)?

- How many votes must a work receive before we can say the “experts” really consider it more important, rather than its vote total being explainable by a random distribution of votes among the set of potentially important works from Question 1?

Because Vanity Fair describes their voting process, we can build a reasonable simulation of it[5]. In the simulation, a pool of experts (E) each make x random[7] and non-repeating votes (sampling without replacement) for works from a set of potentially important works (N), generating a list of important architectural works (n) in which each work (i) has a number of votes (vi).
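The original simulation was written in Perl (see note [5]) and is not reproduced here. What follows is a minimal Python re-creation of the procedure as described above, assuming every expert casts exactly x=5 votes (note [3] says not all of them did); `simulate_survey` is a hypothetical helper. The number of works that receive at least one vote is n.

```python
import random
from collections import Counter

def simulate_survey(num_experts, picks_per_expert, pool_size, rng=random):
    """One simulated survey: each expert picks `picks_per_expert` distinct
    works (sampling without replacement) from a pool of `pool_size` works.
    Returns a Counter mapping work index -> number of votes (vi); works
    with no votes do not appear, so len() of the result is n."""
    votes = Counter()
    for _ in range(num_experts):
        votes.update(rng.sample(range(pool_size), picks_per_expert))
    return votes

rng = random.Random(1980)  # seeded so the run is repeatable
votes = simulate_survey(num_experts=52, picks_per_expert=5, pool_size=164, rng=rng)
print("works with at least one vote (n):", len(votes))
print("most votes for a single work:", max(votes.values()))
```

Sampling within an expert is without replacement (`rng.sample` never repeats an index), while each expert draws independently, which mirrors the "without replacement within, with replacement between" structure of the survey.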

Question 1 can then be restated as: what Ne (the size of the set of potentially important works) is required to give n=132? We are essentially asking how large a set of potentially important works the experts behave as if they were voting on randomly, even though we do not actually propose that such a set really exists (this concept is somewhat analogous to the effective population size[6]). First, let’s observe how n changes as a function of Ne.

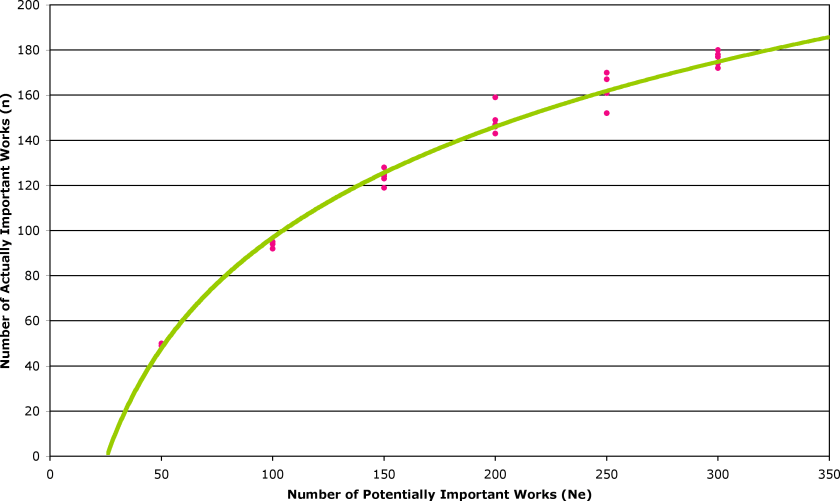

In Figure 1, the pink circles represent individual simulations (because the process is random, repeated runs vary) for different values of N. The green line is the trend line through these data (R²=0.99), and its equation lets us calculate the Ne required to get n=132 on average:

n=70.94*ln(Ne)-229.81
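A sketch of how such a trend line can be fitted and then inverted to solve for Ne, under stated assumptions: the range of pool sizes (140 to 300, three runs each) is my guess rather than the one behind Figure 1, so the fitted coefficients will differ somewhat from 70.94 and -229.81; the fit is ordinary least squares of n on ln(N); and the helper names are hypothetical.

```python
import math
import random
from collections import Counter

def simulate_n(num_experts, picks, pool_size, rng):
    """Number of distinct works receiving at least one vote in one simulated survey."""
    voted = Counter()
    for _ in range(num_experts):
        voted.update(rng.sample(range(pool_size), picks))
    return len(voted)

def fit_log(xs, ys):
    """Ordinary least squares fit of y = a*ln(x) + b; returns (a, b)."""
    lx = [math.log(x) for x in xs]
    mx, my = sum(lx) / len(lx), sum(ys) / len(ys)
    a = sum((u - mx) * (y - my) for u, y in zip(lx, ys)) / sum((u - mx) ** 2 for u in lx)
    return a, my - a * mx

def invert_log(a, b, n):
    """Solve n = a*ln(Ne) + b for Ne."""
    return math.exp((n - b) / a)

rng = random.Random(42)
pools = [N for N in range(140, 301, 20) for _ in range(3)]  # assumed range, 3 runs each
ns = [simulate_n(52, 5, N, rng) for N in pools]
a, b = fit_log(pools, ns)
print(f"fitted: n = {a:.2f}*ln(Ne) + {b:.2f}; Ne for n=132 ~ {invert_log(a, b, 132):.0f}")

# Inverting the trend line reported in the text instead:
print(f"reported fit: Ne for n=132 ~ {invert_log(70.94, -229.81, 132):.0f}")
```

Solving n = a ln(Ne) + b gives Ne = exp((n - b)/a); with the reported coefficients and n=132 that is exp(361.81/70.94), or about 164.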

We find that Ne=164 should give n=132 on average (n=131.2±2.4 from 5 simulations). So, now we know that the hypothetical set of potentially important works contains ~164 architectural achievements. While we have to concede that this set is just a convenience, I cannot help but speculate that the list of 164 possibles says more about what is important (to the experts) than the list of 132 that actually received votes.
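Because each expert's five distinct picks include any particular work with probability 5/N, independently of the other experts, a work gets no votes at all with probability (1 - 5/N)^52, so the expected value of n has a closed form, N(1 - (1 - 5/N)^52). That makes a handy cross-check on the simulations. A short sketch, assuming 52 experts casting exactly five votes each (`expected_n` is a hypothetical name):

```python
def expected_n(pool_size, num_experts=52, picks=5):
    """Expected number of works with at least one vote.
    A given work is missed by one expert with probability 1 - picks/pool_size,
    independently across experts, so it receives zero votes with probability
    (1 - picks/pool_size) ** num_experts."""
    p_zero = (1 - picks / pool_size) ** num_experts
    return pool_size * (1 - p_zero)

print(f"{expected_n(164):.1f}")  # 131.2

# Smallest pool size whose expected n reaches the observed 132.
smallest = min(N for N in range(100, 300) if expected_n(N) >= 132)
print(smallest)
```

At N=164 this reproduces the simulated average of 131.2. Because it uses the exact expectation rather than a log trend line, the smallest N reaching 132 lands slightly above 164, well within the run-to-run noise.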

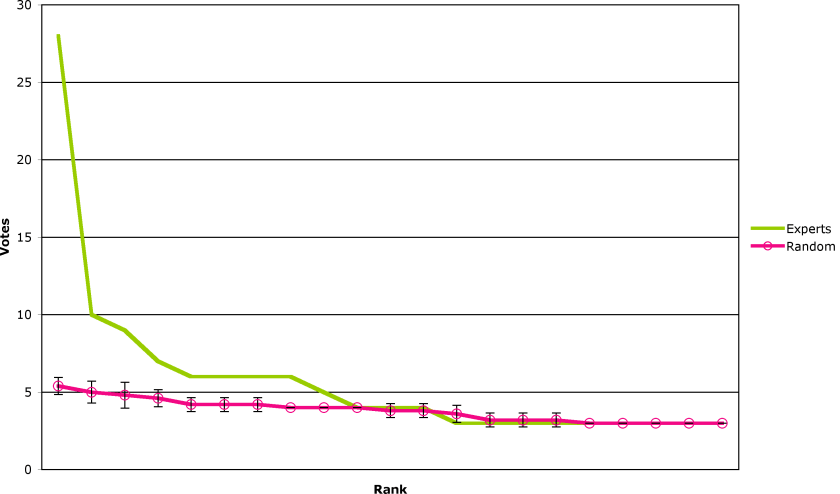

Now, we are equipped to address Question 2. Given the hypothetical set of 164 potentially important works of architecture needed to produce, by random voting, a list of 132 "important" works, we want to know how many votes are needed to distinguish a real preference for a work from a random distribution of votes (i.e., no real preference). We can take a superficial look at this issue by comparing the top 21 vote-getters according to the experts with those from the random simulation (Figure 2), which shows that expert voting becomes indistinguishable from random at around rank #10, or 4 votes.
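The random side of that comparison can be sketched by averaging, over many simulated surveys, the vote total found at each rank. This Python illustration assumes 52 experts casting five votes each from a pool of 164; the function name, seed, and number of runs are arbitrary choices of mine, not taken from the original scripts.

```python
import random
from collections import Counter

def sorted_vote_profile(num_experts, picks, pool_size, top, runs, seed=0):
    """Average vote total at each rank (1..top) over many random surveys."""
    rng = random.Random(seed)
    totals = [0.0] * top
    for _ in range(runs):
        votes = Counter()
        for _ in range(num_experts):
            votes.update(rng.sample(range(pool_size), picks))
        ranked = sorted(votes.values(), reverse=True)
        ranked += [0] * (top - len(ranked))  # pad if fewer than `top` works got votes
        for k in range(top):
            totals[k] += ranked[k]
    return [t / runs for t in totals]

profile = sorted_vote_profile(52, 5, 164, top=21, runs=500)
for rank, avg in enumerate(profile, start=1):
    print(f"rank #{rank}: {avg:.1f} votes on average")
```

Printing `profile` next to the expert vote totals gives the kind of side-by-side comparison shown in Figure 2.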

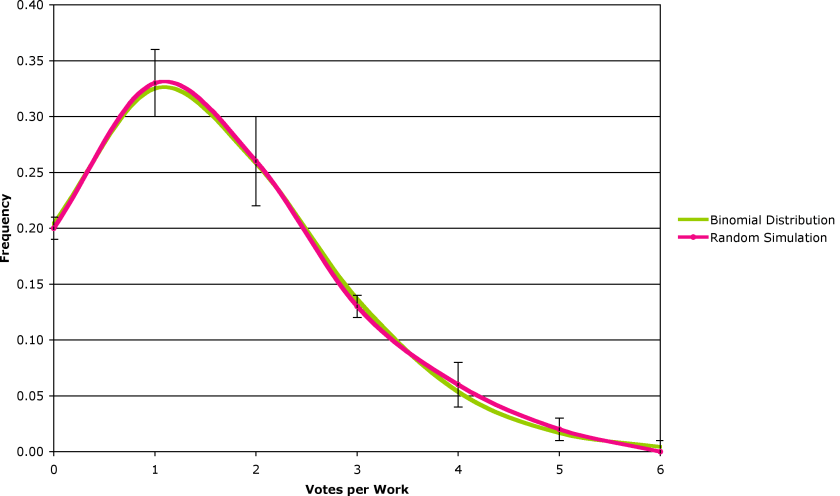

We can, however, put a more formal face on this analysis. If we pick items at random with replacement (i.e., we can pick the same item more than once), the number of times any given item gets picked follows a binomial distribution. Vanity Fair’s voting method is slightly different, as each expert makes five picks without replacement, but between experts the picks are “with replacement”. In practice, this makes little difference, as the simulated vote totals match a binomial distribution very well (Figure 3).
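The claim that sampling without replacement barely matters can be checked directly. For a single work, each expert's five distinct picks include it with probability 5/164, independently of the other experts, so its vote total is exactly Binomial(52, 5/164); sampling without replacement only couples the works to one another (their totals must sum to 260). The Python sketch below, assuming 52 experts with five votes each and a pool of 164 (function names are hypothetical), compares simulated vote frequencies with that binomial.

```python
import math
import random
from collections import Counter

def binomial_pmf(k, n, p):
    """P(X = k) for X ~ Binomial(n, p)."""
    return math.comb(n, k) * p**k * (1 - p) ** (n - k)

def empirical_vote_distribution(num_experts, picks, pool_size, runs, seed=0):
    """Fraction of (work, survey) pairs with each vote total, zero votes included."""
    rng = random.Random(seed)
    counts = Counter()
    for _ in range(runs):
        votes = Counter()
        for _ in range(num_experts):
            votes.update(rng.sample(range(pool_size), picks))
        for work in range(pool_size):
            counts[votes[work]] += 1  # a Counter returns 0 for works with no votes
    total = runs * pool_size
    return {k: c / total for k, c in counts.items()}

E, X, N = 52, 5, 164
observed = empirical_vote_distribution(E, X, N, runs=2000)
for k in range(8):
    print(f"v={k}: simulated {observed.get(k, 0):.4f}  binomial {binomial_pmf(k, E, X / N):.4f}")
```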

Conveniently, the binomial distribution allows us to calculate the probability of a given vote total occurring by chance. This tells us how confident we can be that a work’s vote total reflects real importance within the set of potentially important works. Any vote total with P<0.05 (without correction[9]) differs significantly (at 95% confidence) from the binomial distribution and is, therefore, unlikely to have been produced by chance (Figure 4).
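The threshold in Figure 4 can be reproduced from the binomial probability mass function alone. A minimal sketch, assuming vote totals follow Binomial(52, 5/164) and taking the Bonferroni threshold in note [9] to be 0.05/164 (about 0.0003); `votes_needed` is a hypothetical helper that scans upward from the mean for the first vote total whose probability drops below the cutoff. Its `tail=True` option uses the upper-tail probability P(V ≥ k) instead, the more usual choice for a one-sided test.

```python
import math

def binomial_pmf(k, n, p):
    """P(X = k) for X ~ Binomial(n, p)."""
    return math.comb(n, k) * p**k * (1 - p) ** (n - k)

def upper_tail(k, n, p):
    """P(X >= k) for X ~ Binomial(n, p)."""
    return sum(binomial_pmf(j, n, p) for j in range(k, n + 1))

def votes_needed(alpha, tail=False, num_experts=52, picks=5, pool_size=164):
    """Smallest vote total above the mean whose probability is below alpha."""
    p = picks / pool_size          # chance a given expert votes for a given work
    mean = num_experts * p         # only look above the mean (the upper side)
    for k in range(num_experts + 1):
        prob = upper_tail(k, num_experts, p) if tail else binomial_pmf(k, num_experts, p)
        if k > mean and prob < alpha:
            return k
    return None

print(votes_needed(0.05))        # 5  (uncorrected)
print(votes_needed(0.05 / 164))  # 8  (Bonferroni-corrected)
```

Both the point probability and the upper-tail version give 5 votes uncorrected and 8 with the Bonferroni correction, matching the text and note [10].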

In Figure 4, the pink line represents the probability of seeing a particular vote total in a binomial distribution with the characteristics of our set. The green line represents a probability of 0.05. The point at which the pink line dips below the green line is the point at which we see significance. As the votes only come in integers, the answer is five. Five votes[10] are needed to distinguish a real preference on the part of the experts from random voting on the set of potentially important works. Concerns about significance reduce our list of really important architectural works from 132 to just 9:

1. Guggenheim Museum (Bilbao, Spain) – 28 votes

2. Menil Collection (Houston, TX, USA) – 10 votes

3. Thermal Baths (Vals, Switzerland) – 9 votes

4. HSBC Building (Hong Kong) – 7 votes

5. Seattle Central Library (Seattle, WA, USA) – 6 votes

5. Mediatheque Building (Sendai, Japan) – 6 votes

5. Neue Staatsgalerie (Stuttgart, Germany) – 6 votes

5. Church of the Light (Osaka, Japan) – 6 votes

9. Vietnam Veterans Memorial (Washington, DC, USA) – 5 votes

So, we now know that the Guggenheim is really, really important. The Menil Collection and Thermal Baths are pretty important. The HSBC Building is most likely important, and the other five may be important, although one may be a false positive.

The take-home message is this: the experts can’t agree on what’s important often enough to make it look like they are doing much more than guessing randomly. Neither should you. What’s important is whether the artwork appeals to your aesthetic sense, makes you think, or inspires you.

NOTES

1. Some even argue this tradition is older than acupuncture, but that is just silly (where “that” is acupuncture).

2. Does the fact that I always need the spell checker in order to spell Philistine correctly mean that I am a Philistine? Incidentally, negative stereotypes of fascinating historical cultures and their modern descendants are acceptable, if they are derived from Biblical roots. Right?

3. Being rule-bending artistic types, not all experts felt constrained by the number 5.

4. Rendered from memory. Any errors are due to being hit in the head throughout my twenties. Yes, I went to high school in the 90s.

5. The Perl scripts used to generate the random “surveys” are available as PDFs, although they are completely free of the useful comments that would make them usable, as I wrote them over lunch, in a hurry. For a script that fixes the number of “marvels” picked, see buildings.pl. For a script that starts with a potential pool of marvels to be picked from, see buildings2.pl.

6. The effective population size is the population size needed to explain the observed population genetics of a population; it is not assumed to equal the census population size.

7. Technically, pseudorandom, as the scripts use Perl’s standard rand() function.

8. This analysis itself is a bit simplistic. A large number of votes for one item (e.g., rank #1, the Guggenheim Museum in Bilbao, Spain, received 11% of the total votes cast, from 28 of the 52 experts) could skew the random distribution for the other 131 works. In addition, the number of votes for a work has an upper bound of v=52. These considerations reinforce my argument that drawing conclusions from these types of surveys is complicated.

9. Correcting for multiple hypothesis testing would require P<0.0003. In this case, I used the Bonferroni correction.

10. If one corrects for multiple hypothesis testing[9], then 8 votes would be required.